Why Website Migrations Require Extreme Precision

Website migrations are among the most technically demanding and highest-risk SEO initiatives. Whether the project involves a domain change, CMS switch, structural redesign, HTTPS implementation, or a full replatforming, even minor oversights can result in indexation problems, ranking volatility, and significant traffic loss. That’s why a structured website migration checklist, supported by deep crawl and log analysis, is essential for SEO professionals and developers alike.

The Zero Traffic Loss Migration Checklist created by JetOctopus presents a systematic, data-first framework for executing migrations safely. Instead of relying on assumptions, the guide emphasizes measurable baselines, controlled implementation, and post-launch validation. Most importantly, it explains how a high-performance enterprise crawler plays a central role throughout the entire website migration process, ensuring that no critical SEO signal is overlooked.

Below is a comprehensive summary of the checklist, with a clear focus on how JetOctopus supports every phase of an SEO website migration.

A site migration process simultaneously impacts multiple technical layers:

- URL structures and hierarchies

- Internal linking architecture

- Redirect rules

- Canonicalization logic

- Robots directives

- XML sitemaps

- Crawl budget allocation

- Server response behavior

Any inconsistency between old and new versions of the site can disrupt how search engines interpret and prioritize pages. Without a reliable dataset of the current site and real-time monitoring during rollout, traffic volatility becomes almost inevitable.

This is why the checklist is structured around three pillars:

- Full pre-migration benchmarking

- Controlled implementation

- Continuous post-launch monitoring

JetOctopus functions as the technical backbone across all three.

The Zero Traffic Loss Website Migration Checklist

Below is a structured breakdown of the core website migration steps, combined with the specific ways JetOctopus supports SEO and development teams.

1. Pre-Migration Crawl & Benchmarking

Before any code is deployed, teams must capture a complete snapshot of the current site. This baseline ensures that nothing valuable disappears during the transition.

Key actions in this phase include:

- Crawling all existing URLs to build a comprehensive URL inventory.

- Identifying indexable, non-indexable, and canonicalized pages.

- Exporting metadata (titles, descriptions, headings).

- Mapping internal link structures and crawl depth.

- Detecting orphan pages.

- Auditing status codes and redirect chains.

- Identifying top-performing URLs that require careful migration handling.
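
As a rough illustration of the benchmarking step, the sketch below buckets pages from a crawl export into indexable, canonicalized, and non-indexable groups. It is tool-agnostic: the `Page` fields, the bucket names, and the sample URLs are assumptions made for this example, not the export format of any particular crawler.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    """One row of a hypothetical crawl export (field names are assumptions)."""
    url: str
    status: int
    meta_robots: str = ""            # e.g. "noindex, follow"
    canonical: Optional[str] = None  # canonical URL declared on the page


def classify(page: Page) -> str:
    """Bucket a page as indexable, canonicalized, or non-indexable."""
    if page.status != 200:
        return "non-indexable"
    directives = {d.strip().lower() for d in page.meta_robots.split(",")}
    if "noindex" in directives:
        return "non-indexable"
    if page.canonical and page.canonical != page.url:
        return "canonicalized"
    return "indexable"


def build_inventory(pages: list[Page]) -> dict[str, list[str]]:
    """Group URLs by bucket so the baseline can be diffed after launch."""
    inventory: dict[str, list[str]] = {
        "indexable": [], "canonicalized": [], "non-indexable": []
    }
    for page in pages:
        inventory[classify(page)].append(page.url)
    return inventory


# Illustrative sample data only.
baseline = build_inventory([
    Page("https://example.com/", 200),
    Page("https://example.com/shoes?sort=price", 200,
         canonical="https://example.com/shoes"),
    Page("https://example.com/cart", 200, meta_robots="noindex, follow"),
    Page("https://example.com/old-page", 404),
])
```

Saving an inventory like this before launch gives the team a fixed reference: any URL that changes bucket after the migration deserves a closer look.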

How JetOctopus helps:

- Performs ultra-fast crawling at scale, even for enterprise-level sites.

- Segments pages by indexability, directives, and status codes.

- Visualizes site structure and internal linking patterns.

- Enables bulk export for redirect mapping.

- Provides data comparisons for pre- and post-migration validation.

For developers, this phase creates a clear technical specification. For SEOs, it establishes the foundation of a reliable SEO migration plan.

2. Redirect Mapping & Validation

Redirect implementation is one of the most critical elements of any site migration checklist. Even small errors can fragment link equity or create crawl inefficiencies.

The checklist stresses the importance of:

- Creating a precise one-to-one redirect map.

- Avoiding redirect chains and loops.

- Using 301 redirects for permanent changes.

- Ensuring legacy URLs resolve correctly.

- Pointing each legacy URL directly at its final destination rather than at another redirect.

- Testing redirect logic in staging before launch.
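
The chain and loop checks above can be run against the redirect map itself before anything is deployed. The following is a minimal sketch, assuming the map is available as a plain source-to-target dictionary; the function names and sample paths are hypothetical.

```python
def resolve(redirects: dict[str, str], start: str, max_hops: int = 10):
    """Follow `start` through the map. Returns (final_url, hops, is_loop)."""
    seen = {start}
    current = start
    hops = 0
    while current in redirects and hops < max_hops:
        current = redirects[current]
        hops += 1
        if current in seen:          # we came back to a URL already visited
            return current, hops, True
        seen.add(current)
    return current, hops, False


def audit_redirect_map(redirects: dict[str, str]) -> dict[str, list]:
    """Report sources that need more than one hop or that never resolve."""
    issues = {"chains": [], "loops": []}
    for source in redirects:
        final, hops, is_loop = resolve(redirects, source)
        if is_loop:
            issues["loops"].append(source)
        elif hops > 1:
            issues["chains"].append((source, final, hops))
    return issues


def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Point every non-looping source straight at its final destination."""
    flat = {}
    for source in redirects:
        final, _, is_loop = resolve(redirects, source)
        if not is_loop:
            flat[source] = final
    return flat


# Illustrative sample data only.
redirect_map = {
    "/old-a": "/new-a",
    "/old-b": "/old-a",   # chain: /old-b -> /old-a -> /new-a
    "/x": "/y",
    "/y": "/x",           # loop
}
issues = audit_redirect_map(redirect_map)
flat_map = flatten(redirect_map)
```

Here `issues` reports `/old-b` as a two-hop chain and `/x` and `/y` as a loop, while `flat_map` sends both `/old-a` and `/old-b` directly to `/new-a`. Loops are left out of the flattened map on purpose, since they need a human decision about the correct target.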

How JetOctopus supports this stage:

- Bulk-analyzes redirect chains across thousands of URLs.

- Flags incorrect status codes, such as a temporary 302 where a permanent 301 is intended.

- Detects broken redirects and unexpected 404 responses.

- Validates canonical alignment after redirects.

- Compares crawl data before and after implementation to detect gaps.

This reduces the risk of traffic loss during a website migration and gives developers actionable technical validation.

3. Internal Linking & Architecture Control

During a website migration project, internal links are often overlooked. Relying on redirects for internal navigation weakens crawl efficiency and wastes crawl budget.

The checklist recommends:

- Updating internal links to point directly to new URLs.

- Maintaining logical crawl depth distribution.

- Avoiding orphaned pages after migration.

- Preserving navigation and breadcrumb structure.

- Checking internal anchor text consistency.

- Ensuring key commercial pages retain strong internal linking signals.
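
Several of these checks reduce to simple operations on the internal link graph. The sketch below assumes the graph is available as a dictionary of page to outgoing links (for example, from a crawl export); the function names and sample paths are hypothetical.

```python
from collections import deque


def crawl_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage; depth = minimum clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def legacy_links(links: dict[str, list[str]],
                 redirect_map: dict[str, str]) -> list[tuple[str, str]]:
    """Internal links whose target is a redirected (legacy) URL."""
    return [
        (source, target)
        for source, targets in links.items()
        for target in targets
        if target in redirect_map
    ]


def orphans(links: dict[str, list[str]],
            known_urls: list[str], home: str) -> list[str]:
    """Known URLs that cannot be reached by following links from `home`."""
    reachable = crawl_depths(links, home)
    return sorted(set(known_urls) - set(reachable))


# Illustrative sample data only.
links = {
    "/": ["/shoes", "/old-sale"],
    "/shoes": ["/shoes/red"],
    "/old-sale": [],
}
redirect_map = {"/old-sale": "/sale"}
known_urls = ["/", "/shoes", "/shoes/red", "/sale", "/old-sale"]

depths = crawl_depths(links, "/")
stale = legacy_links(links, redirect_map)
orphaned = orphans(links, known_urls, "/")
```

In this sample the homepage still links to `/old-sale`, so `stale` flags that link, and `/sale` shows up as orphaned because it is reachable only through the redirect rather than through a direct internal link.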

How JetOctopus assists:

- Identifies internal links that still point to legacy URLs.

- Detects orphan pages by comparing crawl data with log files.

- Visualizes internal link equity distribution.

- Measures depth changes before and after migration.

- Highlights structural inconsistencies introduced during redesign.

For technical teams managing large-scale website migrations, this structural oversight is invaluable.

4. Indexability & Technical Signal Validation

One of the biggest risks in a website migration is unintentionally blocking or deindexing important pages.

The checklist prioritizes validating:

- Robots.txt rules and crawl permissions.

- Meta robots directives (noindex/nofollow).

- Canonical tag accuracy.

- Hreflang consistency for international sites.

- XML sitemap accuracy and freshness.

- Correct server response codes (200 for live pages, 301 for redirected legacy URLs).
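
The robots.txt check in particular is easy to automate before launch. The sketch below uses Python's standard `urllib.robotparser` to test a list of priority URLs against a robots.txt file; the helper name, the sample rules, and the URLs are assumptions for illustration. Note that the standard-library parser may not interpret wildcard patterns the way Google does, so treat it as a first-pass check rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str],
                 user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that `user_agent` is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Illustrative robots.txt, e.g. a staging rule that slipped into production.
robots_txt = """\
User-agent: *
Disallow: /checkout/
Disallow: /category/
"""

priority_urls = [
    "https://example.com/category/shoes",
    "https://example.com/product/1",
    "https://example.com/checkout/cart",
]

blocked = blocked_urls(robots_txt, priority_urls)
```

Running this against the new robots.txt with the list of top-performing URLs from the baseline shows at a glance whether any revenue-critical section has been disallowed by mistake.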

How JetOctopus strengthens this step:

- Crawls staging environments to detect blocked assets.

- Flags unexpected noindex directives.

- Audits canonical relationships at scale.

- Analyzes hreflang clusters for errors.

- Compares sitemap URLs against crawl results to detect mismatches.

This comprehensive technical validation protects index stability during an SEO site migration rollout.
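
The sitemap-versus-crawl comparison mentioned above can be approximated with a set difference. This is a minimal sketch, assuming a single standard XML sitemap (not a sitemap index) and a set of indexable URLs taken from a crawl; the function names and sample data are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Extract every <loc> value from a urlset sitemap."""
    root = ET.fromstring(xml_text.strip())
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def compare(sitemap: set[str], crawled_indexable: set[str]) -> dict[str, list[str]]:
    """List mismatches in both directions."""
    return {
        "in_sitemap_not_crawled": sorted(sitemap - crawled_indexable),
        "crawled_not_in_sitemap": sorted(crawled_indexable - sitemap),
    }


# Illustrative sample data only.
sitemap_xml = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/sale</loc></url>
  <url><loc>https://example.com/old-sale</loc></url>
</urlset>
"""
crawled = {
    "https://example.com/",
    "https://example.com/sale",
    "https://example.com/shoes",
}

report = compare(sitemap_urls(sitemap_xml), crawled)
```

In this sample the sitemap still lists the legacy `/old-sale` URL and is missing `/shoes`, which are the two kinds of mismatch worth fixing before search engines reprocess the file.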

5. Post-Launch Crawl & Log Monitoring

The migration is not complete after launch. The days and weeks following deployment are critical for detecting early warning signals.

The checklist recommends:

- Running an immediate full-site crawl post-launch.

- Comparing new crawl results with baseline data.

- Monitoring 404 errors and redirect inconsistencies.

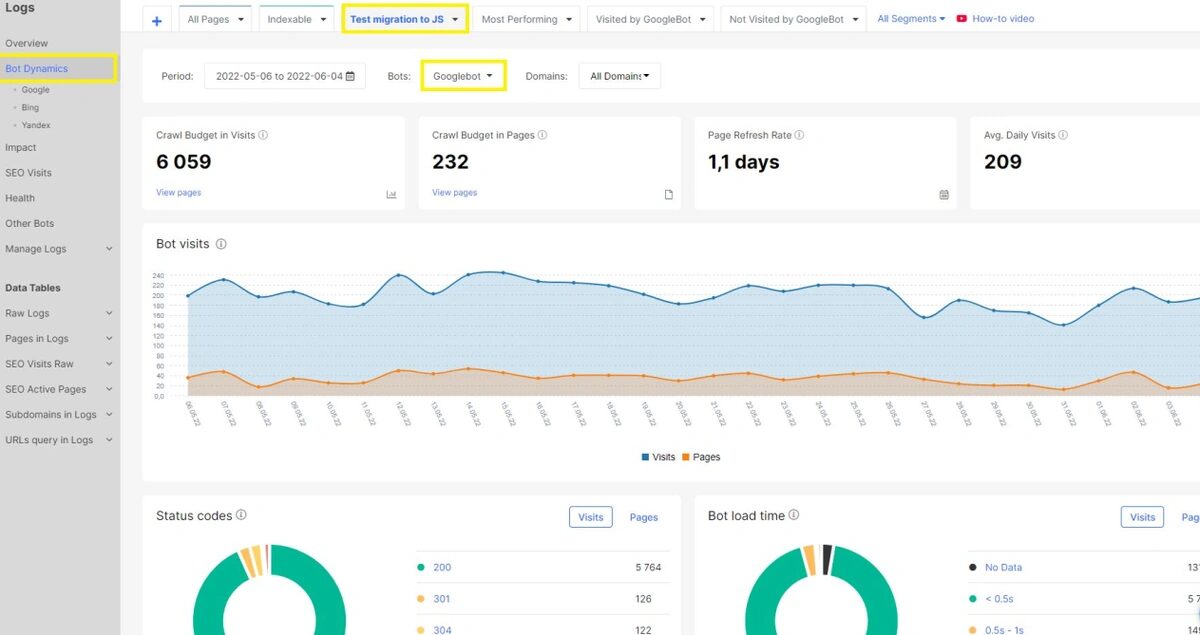

- Analyzing search bot behavior via server logs.

- Identifying crawl budget inefficiencies.

- Tracking structural changes that may affect ranking performance.
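
Comparing post-launch results with the baseline can be expressed as a simple rule: every baseline URL should either still return 200 or return a 301 to its mapped target. The sketch below assumes the observed responses have already been collected (by a crawler or an HTTP client with redirect following disabled); the data shapes and names are assumptions for this example.

```python
from typing import Optional


def validate_launch(
    baseline_urls: list[str],
    redirect_map: dict[str, str],
    responses: dict[str, tuple[int, Optional[str]]],
) -> list[tuple[str, str]]:
    """Check each baseline URL against its expected post-launch behavior.

    `responses` maps URL -> (status code, Location header or None).
    """
    problems = []
    for url in baseline_urls:
        if url not in responses:
            problems.append((url, "not crawled"))
            continue
        status, location = responses[url]
        expected_target = redirect_map.get(url)
        if expected_target is None:
            # URL was meant to stay in place.
            if status != 200:
                problems.append((url, f"expected 200, got {status}"))
        elif status != 301:
            problems.append((url, f"expected 301, got {status}"))
        elif location != expected_target:
            problems.append(
                (url, f"redirects to {location}, expected {expected_target}")
            )
    return problems


# Illustrative sample data only.
baseline_urls = ["/", "/old-sale", "/old-blog", "/old-about"]
redirect_map = {"/old-sale": "/sale", "/old-blog": "/blog", "/old-about": "/about"}
responses = {
    "/": (200, None),
    "/old-sale": (301, "/sale"),
    "/old-blog": (302, "/blog"),   # temporary redirect where 301 was planned
    "/old-about": (404, None),     # redirect rule missing
}

problems = validate_launch(baseline_urls, redirect_map, responses)
```

With this sample, `problems` contains the 302 on `/old-blog` and the 404 on `/old-about`, which is the kind of short, actionable list a development team can work through in the first hours after launch.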

How JetOctopus provides an advantage:

- Combines crawl data with log file analysis in one interface.

- Shows how Googlebot interacts with new URLs.

- Highlights high-value pages that bots are ignoring.

- Detects unexpected crawl spikes or drops.

- Enables fast debugging before rankings decline.

This unified crawl + log perspective makes JetOctopus especially powerful during high-stakes website migrations.
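
To make the log side of this concrete, the sketch below counts Googlebot requests per path and status from access log lines and lists priority pages that received no bot hits. It assumes the common "combined" log format and identifies Googlebot by user-agent string only, which can be spoofed; production analysis would normally verify the bot (for example, by reverse DNS). The regex, function names, and log lines are illustrative assumptions.

```python
import re
from collections import Counter

# Matches the widely used "combined" access log format.
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines: list[str]) -> Counter:
    """Count requests per (path, status) where the user agent names Googlebot."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[(match.group("path"), int(match.group("status")))] += 1
    return hits


def ignored_pages(priority_paths: list[str], hits: Counter) -> list[str]:
    """Priority paths with no Googlebot request in the analyzed logs."""
    crawled = {path for path, _status in hits}
    return [path for path in priority_paths if path not in crawled]


# Illustrative log lines only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
log_lines = [
    f'66.249.66.1 - - [10/Mar/2025:10:00:00 +0000] "GET /sale HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2025:10:00:05 +0000] "GET /old-about HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [10/Mar/2025:10:00:07 +0000] "GET /shoes HTTP/1.1" 200 8000 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits = googlebot_hits(log_lines)
not_visited = ignored_pages(["/sale", "/shoes"], hits)
```

In this sample, Googlebot has fetched `/sale` and hit a 404 on `/old-about`, while `/shoes` has only been requested by a regular browser and is therefore reported as not yet visited by the bot.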

Key Benefits of Using JetOctopus During Website Migrations

Beyond supporting individual checklist items, the platform delivers strategic advantages that improve the overall site migration plan.

Here are the core benefits for SEO professionals and developers:

- Enterprise Scalability. Capable of crawling millions of URLs without compromising performance – essential for large e-commerce or content-heavy platforms.

- Integrated Crawl and Log Analysis. Offers a combined technical and behavioral view of search engine activity, reducing blind spots.

- Before-and-After Comparison. Easily compare datasets to detect missing pages, structural shifts, or redirect errors.

- Advanced Segmentation & Filtering. Analyze URLs by status code, directives, parameters, canonical type, or crawl depth.

- Crawl Budget Optimization. Identify wasted bot activity and ensure priority pages receive adequate crawl attention.

- Developer-Friendly Data Exports. Structured exports simplify communication between SEO and engineering teams.

- Faster Issue Detection. Discover migration-related errors within hours rather than weeks.

- Reduced Traffic Volatility Risk. By validating every technical signal, teams significantly lower the probability of ranking drops.

These capabilities transform a standard website migration guide into a fully measurable and controlled execution framework.

A Data-Driven Approach to Zero Traffic Loss

The core principle of the Zero Traffic Loss methodology is simple: migrations fail not because they are complex, but because they are executed without complete visibility.

A robust checklist for website migration must include:

- Full URL inventory and benchmarking

- Precise redirect mapping

- Internal link restructuring

- Technical signal validation

- Post-launch crawl comparison

- Continuous bot behavior monitoring

JetOctopus supports each of these layers with scalable crawling, advanced segmentation, and integrated log analysis.

For SEO specialists and developers managing a high-impact website migration plan, this level of control is not optional – it is essential.

From Migration Risk to Measurable SEO Control

Website migrations will always carry inherent risk. However, traffic loss is largely preventable when the process is guided by a structured SEO website migration checklist and powered by a reliable technical data platform.

The Zero Traffic Loss Migration Checklist demonstrates how disciplined preparation, systematic validation, and post-launch monitoring can protect organic performance throughout even the most complex website migration project.

By leveraging scalable crawling and real bot behavior analysis, JetOctopus enables teams to move from uncertainty to precision – turning migrations from high-risk events into controlled, measurable SEO operations.